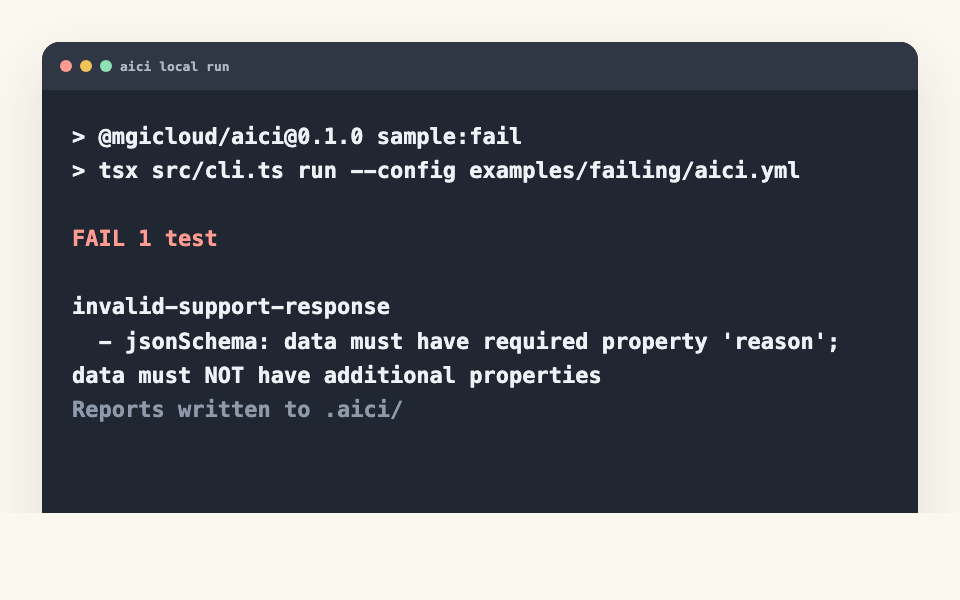

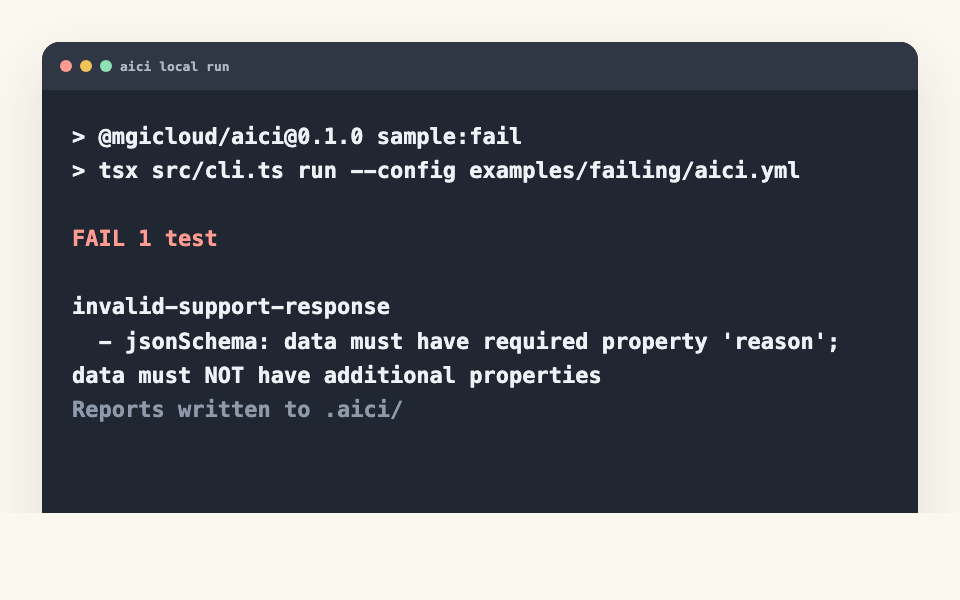

CLI fails locally

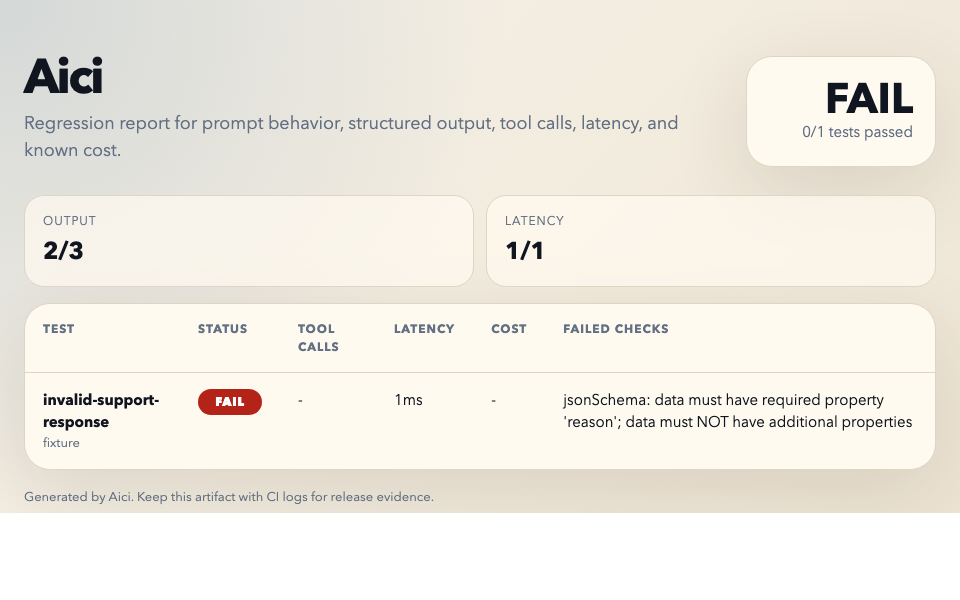

Aici is a local-first CLI and GitHub Action for prompt, JSON, tool-call, latency, and cost contracts in pull requests. No telemetry. No Aici backend. Live checks call only the provider endpoint your config declares.

```sh
npx @mgicloud/aici init --config aici.yml
```

Aici turns AI behavior into ordinary CI evidence, using the same workflow you would run in `.github/workflows/aici.yml`.

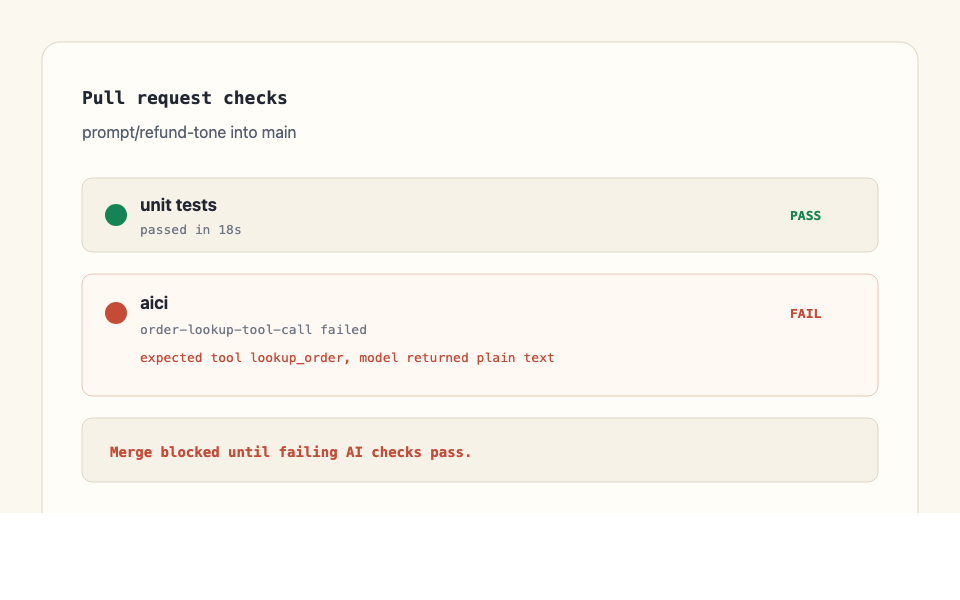

The launch page carries concrete product states: local CLI failure, pull-request failure, and the HTML report artifact a developer gets after a run.

A real failing fixture run exits non-zero and shows the exact check that broke.

The GitHub Action turns prompt regressions into normal pull-request evidence.

The same run writes Markdown, JSON, and HTML reports for review and CI artifacts.
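Because a failing run exits non-zero, any CI system can gate on it without parsing output. The following is a minimal sketch of that convention only. `run_gate` is a hypothetical helper, and the failing command is a stand-in Python one-liner rather than a real Aici invocation.

```python
import subprocess
import sys


def run_gate(command):
    """Run a check command; return its exit code and captured stdout."""
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode, result.stdout


# Stand-in for a failing check: prints a message and exits with status 1.
failing = [sys.executable, "-c", "import sys; print('FAIL json_schema'); sys.exit(1)"]

code, log = run_gate(failing)
if code != 0:
    # A CI step would stop here and surface the log on the pull request.
    print(f"gate failed with exit code {code}: {log.strip()}")
```

In a real pipeline the stand-in command would be replaced by the check you actually run. The gate logic itself does not need to know anything beyond the exit code.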

Fixture tests catch contract regressions without provider spend, latency, or model flakiness. Live calls are best reserved for the few prompts where real model behavior is the thing under test.
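To make the idea of a fixture test concrete, here is a rough sketch of a contract check run against a recorded response, with no provider call involved. Everything in it is an assumption for illustration: the fixture layout, the contract field names, and `check_fixture` are hypothetical and are not taken from Aici's YAML schema or implementation.

```python
import json

# Hypothetical recorded fixture: a saved model response plus run metadata.
FIXTURE = json.loads("""
{
  "output": {"intent": "refund", "confidence": 0.93},
  "latency_ms": 420,
  "cost_usd": 0.0012
}
""")

# Hypothetical contract: required JSON keys plus latency and cost budgets.
CONTRACT = {
    "required_keys": ["intent", "confidence"],
    "max_latency_ms": 1000,
    "max_cost_usd": 0.01,
}


def check_fixture(fixture, contract):
    """Return a list of failure messages; an empty list means the contract holds."""
    failures = []
    output = fixture.get("output", {})
    for key in contract["required_keys"]:
        if key not in output:
            failures.append(f"missing key: {key}")
    if fixture["latency_ms"] > contract["max_latency_ms"]:
        failures.append(
            f"latency {fixture['latency_ms']}ms exceeds {contract['max_latency_ms']}ms"
        )
    if fixture["cost_usd"] > contract["max_cost_usd"]:
        failures.append(
            f"cost ${fixture['cost_usd']} exceeds ${contract['max_cost_usd']}"
        )
    return failures


print(check_fixture(FIXTURE, CONTRACT))  # prints [] because the fixture passes
```

Since the input is a saved file, the check is deterministic: a later prompt change that drops `confidence` from the recorded output would fail the same way on every run.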

Aici sits next to your test runner, not between you and your model. It can print the provider endpoints, dependencies, and source surface it relies on before live checks run.

The CLI, GitHub Action, YAML schema, examples, and templates are in one repo you can inspect and fork.

Aici does not send usage analytics or eval data to an Aici service. Fixture tests make no provider calls.

Live checks call OpenAI, Anthropic, or your OpenAI-compatible endpoint directly. Production traffic does not pass through Aici.

The CLI and Action are free. Hosted history may become paid later, but the release gate is not a fake free tier.

Aici ships with a README, npm package, GitHub Action, examples, and docs. You can evaluate it without a sales call.

Aici v1 does one job: prove AI outputs still match the contract before merge. Hosted tracing, annotation, and generation are deliberately out of scope for v1.

To remove Aici, delete `aici.yml` and the workflow file. There is no data export to request and no offboarding email to send.

`aici audit --json` lists endpoints and dependencies. Fixture gates can run in Docker with `--network none`.
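Running in a container with `--network none` gives an OS-level guarantee that a fixture gate cannot reach a provider. A lighter, in-process approximation of the same idea is sketched below, assuming the code under test opens connections through Python's `socket.socket`. `no_network` and `NetworkBlocked` are hypothetical names for this example and are not part of Aici.

```python
import socket
from contextlib import contextmanager


class NetworkBlocked(RuntimeError):
    """Raised when code tries to open a socket inside a no_network() block."""


@contextmanager
def no_network():
    """Temporarily replace socket.socket so any new connection attempt fails loudly."""
    original = socket.socket

    def guard(*args, **kwargs):
        raise NetworkBlocked("network access attempted during a fixture run")

    socket.socket = guard
    try:
        yield
    finally:
        # Always restore the real socket class, even if the block raised.
        socket.socket = original


with no_network():
    try:
        socket.socket()
    except NetworkBlocked as error:
        print(f"blocked: {error}")
```

This guard only catches sockets created through the patched name in the current process, so it is weaker than the container-level isolation and works best as a fast local check alongside it.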

You can ship Aici in production CI without paying. The first paid offers should be setup, fixture design, CI hardening, and private trust packs, not a dashboard chasing a crowded category.

Initialize a config, commit the workflow, push a branch. The next time someone changes the prompt, the PR tells them what broke before users do.

```sh
npx @mgicloud/aici init --config aici.yml && npx @mgicloud/aici run --config aici.yml
```